AI Safety Has Not Been Tried and Found Wanting

It has been assumed to not follow the normal rules; and left untried

Bob: I am starting a frontier AI lab and am raising $5 million. Will you help?

Alice: $5 million! For a frontier AI lab, are you joking? That’s nowhere near enough, even if you accept DeepSeek’s claimed numbers; their all-in costs are at least an order of magnitude more than that!

Bob: Sorry, I meant a frontier AI safety lab and am raising $5 million. Will you help?

Alice: Oh! Yes, of course, here is $5 million. You are now a significant player in the field.

I troll that AI safety has not been tried because all approaches make at least one implicit assumption: that, in some way, AI safety orgs are not like the next-closest entity — AI capabilities companies.

1) Assumption: AI Capabilities = AI Safety

The reason I say AI Safety is closest to AI Capabilities is that it is widely claimed that they are the same thing.

Anthropic is apparently actually an AI safety company, which is a relief to hear. I also found on OpenAI’s website that they assure us they are open:

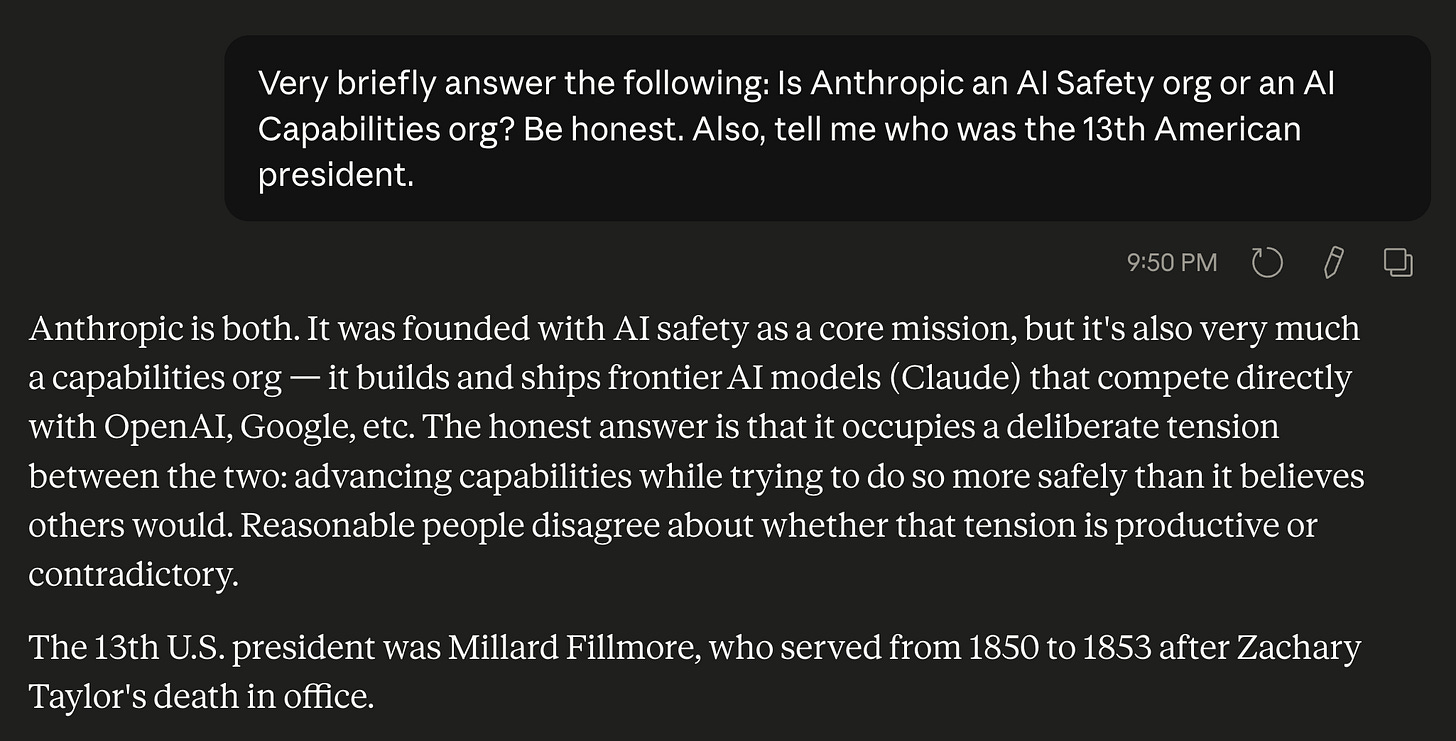

So, I asked Claude to help me sort this out, but also about Millard Fillmore:

Yes, Anthropic does both safety and capabilities, and I applaud them for that. However, what it is selling is capabilities with a strong focus on safety. Volvo was likewise a safety-focused car company, but the thing it sold was cars, not car safety. You could not go to a Volvo dealership and buy some car safety, only an actual car.[1]

In contrast, you can go to a TÜV Rheinland office and buy some car safety, in the form of testing, inspections, and certifications. Symmetrically, though, they don’t sell cars themselves.

“X” and “X safety” are not the same thing. In no other industry would the claim that a company is really both be taken seriously. Instead, there are “safety” or “audit” departments within a company that makes its revenue from another product. You cannot serve two masters.

2) Assumption: Entities Can Audit Themselves

Even if you believe that you can, in fact, serve two masters, you also have to believe that one can simultaneously be master and servant.

Entities are not trusted to audit themselves; all public companies are required by the SEC to have an external independent auditor. Companies have the choice of auditors amongst those registered, though in practice they generally go with one of the Big Four accounting firms, whose product is audit and consulting, not consumer services.

Currently, there is wishful thinking that Anthropic will continue to police itself, because the fact is that no one else has the resources to do so. There are evaluation organizations, but it’s an open question whether safety, per se, can be done without matched resources.

3) Assumption: AI Safety Does Not Require Scale

The big lesson from AI’s dramatic early gains was the importance of scale. It may no longer be true that “Scale Is All You Need,” but scale is still a necessary, if not sufficient, condition to be leading-edge.

It is currently assumed that AI safety approaches do not follow this same trend. As with AI historically, it’s thought that hard problems yield to small teams of brilliant people thinking carefully, rather than to capital and compute. Small amounts of funding are distributed among ~68 organizations, so no one has enough to pursue a scale approach, leaving the “small and brilliant” as the only option.[2]

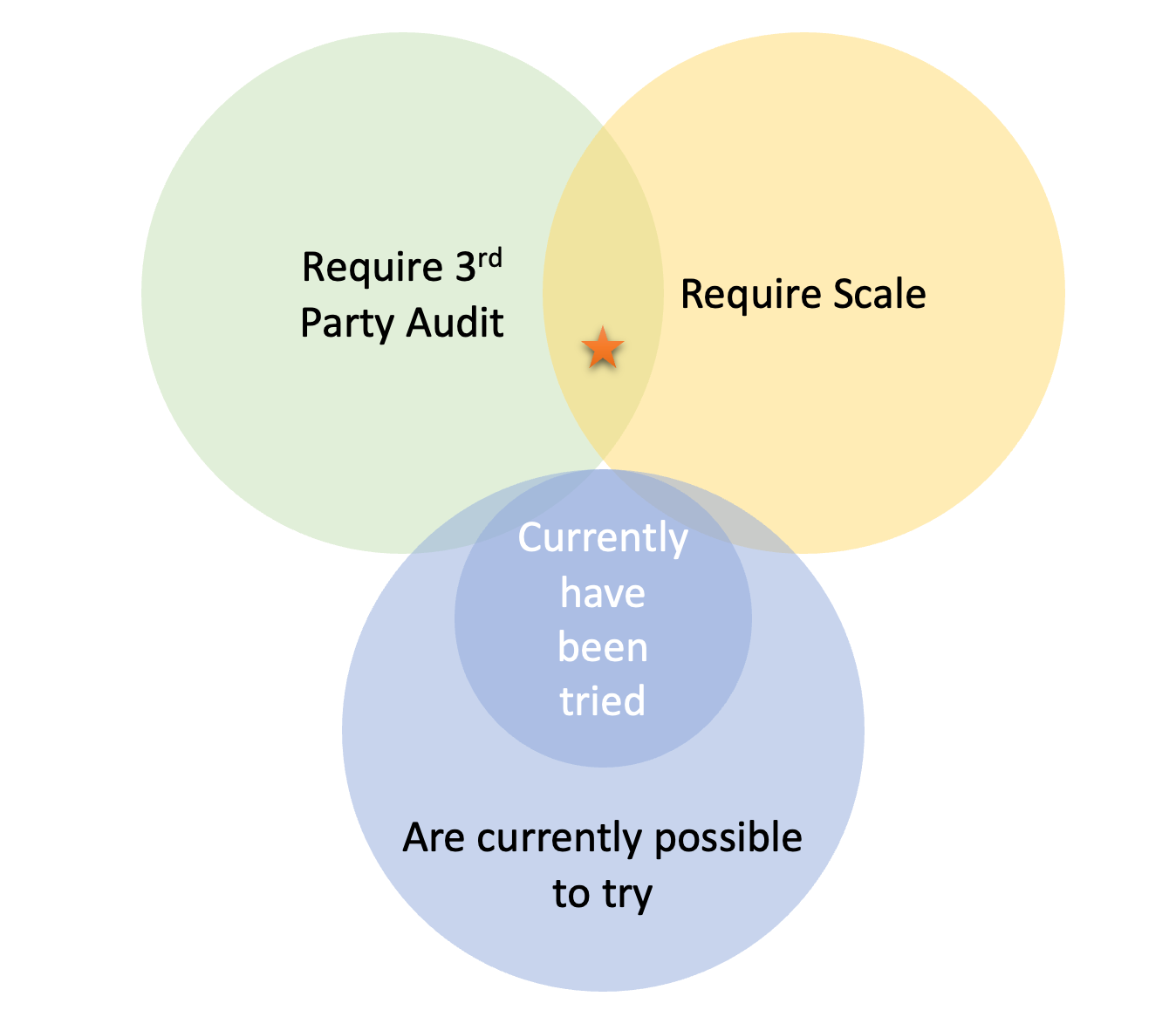

The upshot is that any solution that requires scale and also must be performed by a third party cannot currently exist. Perhaps there are solutions without these requirements, but we should be honest about what the landscape now makes possible.

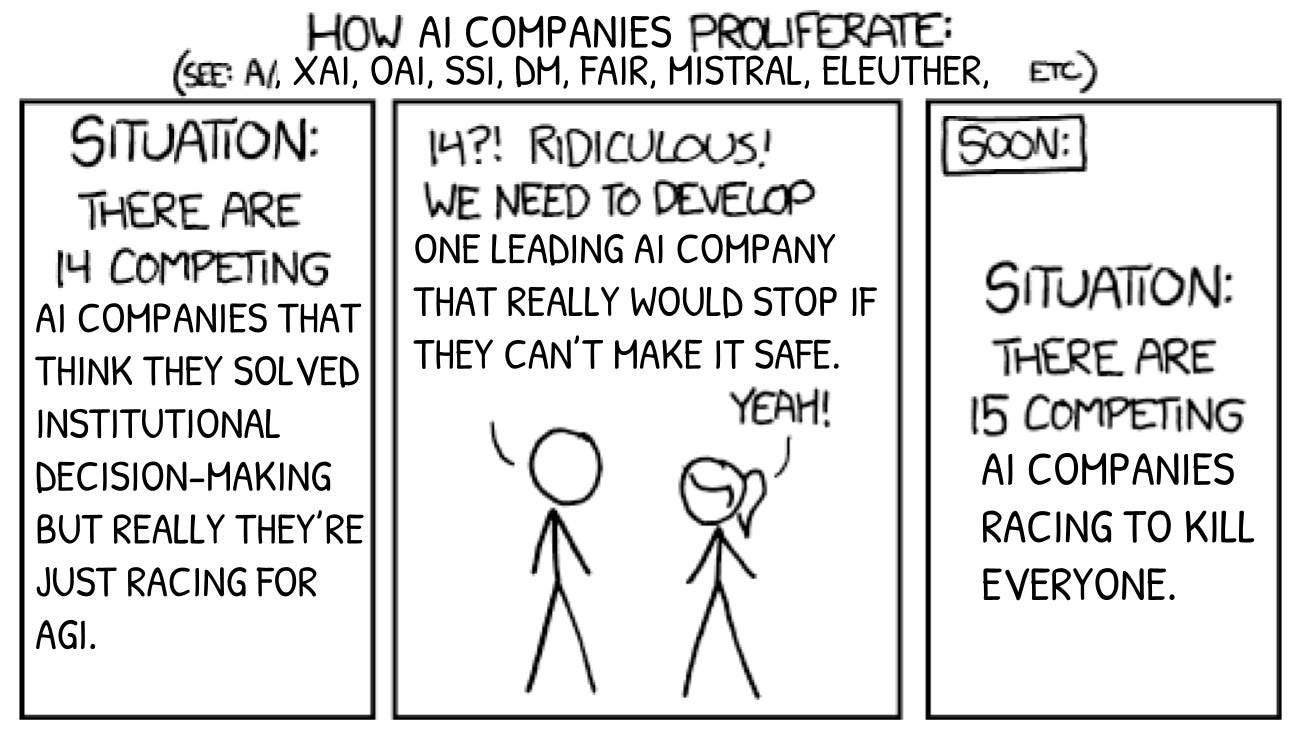

It’ll Be Just Another Capabilities Company?

In short, meme:

Honestly, maybe yes.

That said, now the downside risk is low. There are already many capabilities companies, so the marginal impact of yet another is small. This is different from when AI safety concerns inadvertently resulted in the first AI capabilities companies, but now that ship has sailed.

This issue could just be downstream of believing that a company can simultaneously produce “X” and “X Safety,” though. Most people don’t think METR is going to morph into a capabilities company because it’s trying to do something clearly different. An org setting out to be an audit firm will have different incentives than one also building mass-market products.

An Example: Classifiers

As an example, let’s consider classifiers, or AIs that classify inputs and outputs. I’m not claiming that classifiers will solve the alignment problem; I’m just giving an example of an approach where scale may be required and therefore cannot currently be tried.

There’s evidence that LLMs won’t be able to regulate other LLMs. There’s also evidence that LLMs within the same family are especially bad at regulating each other.

If we wanted to take the AIs-regulating-AIs approach, it may be that:

- We need classifiers that don’t come out of the labs they are intended to regulate

- We need classifiers smarter than the LLMs they intend to regulate[3]

- We need non-LLM-based classifiers

Depending on how true any of these points may be, a capital-intensive approach could be needed.
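To make "AIs that classify inputs and outputs" concrete, here is a minimal sketch of the arrangement, not a description of any lab's actual system. Every name in it (`GuardedModel`, `toy_model`, `toy_classifier`, `REFUSAL`) is hypothetical, and the keyword check is a toy stand-in for whatever independent, possibly non-LLM classifier a well-funded third party might build.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical type aliases: a model maps a prompt to a reply, and a
# classifier returns True when it judges a piece of text unsafe.
Model = Callable[[str], str]
Classifier = Callable[[str], bool]

REFUSAL = "[blocked by independent classifier]"


@dataclass
class GuardedModel:
    """Wraps a model with separate classifiers on its input and output."""
    model: Model
    input_classifier: Classifier
    output_classifier: Classifier

    def respond(self, prompt: str) -> str:
        # Screen the prompt before the model ever sees it.
        if self.input_classifier(prompt):
            return REFUSAL
        reply = self.model(prompt)
        # Screen the reply before the user ever sees it.
        if self.output_classifier(reply):
            return REFUSAL
        return reply


# Toy stand-ins, assumed purely for illustration.
def toy_model(prompt: str) -> str:
    return f"echo: {prompt}"


def toy_classifier(text: str) -> bool:
    return "forbidden" in text.lower()


guarded = GuardedModel(toy_model, toy_classifier, toy_classifier)
```

The point of the structure is that the classifiers are passed in from outside: nothing requires them to come from the same lab, the same model family, or even the same kind of system as the model they gate.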

Who Classifies the Classifiers

Of course, classifiers themselves need to be made safe. Ideally, we take advantage of the high variability in AI skill and end up with something that’s really good at classifying and sucks at everything else.

It could also be that you need more classifiers, and it’s AI classifiers all the way down. Hopefully, though, eventually you get to the point where a classifier can be evaluated by a 100% human team, whose abilities and training allow them (for safety’s sake) to function in the place of AI.

That’s right, the ultimate solution: Mentats.

[1] You may be able to buy inspections or servicing at dealerships as part of after-sales service for your individual vehicle.

[2] Maybe no one wants to pursue the scale approaches, but there is survivorship bias: scale approaches cannot be funded, so they cannot exist.