Shots Fired in the Third War of Priors

Empiricists and Rationalists have fought for thousands of years. Now it's about AI.

The First War of Priors was between the Aristotelians, who combined sensory experience with intellect, and the Platonists, who thought reality existed in abstract ideas beyond the senses. The Platonists got the upper hand, and knowledge-seeking through contemplation was the prestige choice for 1800 years.

The Second War of Priors was between the British Empiricists, like Bacon and Hume, and the Continental Rationalists, like Descartes and Leibniz. The power shift to the Empirical side was the Scientific Revolution, though both would claim Newton as their own.[1]

The Third War of Priors involves the Bay Area Rationalists, who came to prominence after correctly predicting the importance of AI. Their opponents haven’t yet sufficiently coalesced to be named.

Bayes and Priors

I call these debates “Wars of Priors” because, fundamentally, they are about how one arrives at one’s prior probability.

With Bayesian reasoning, you start with a prior probability of something occurring, for example, seeing a polar bear in Texas. It’s not their typical habitat, so most people will have a low prior probability of this. If a child were to say they saw one around, you would still think there probably wasn’t a literal polar bear nearby.

However, if you’re in Texas but at the zoo, and that child says they saw a polar bear, you’re more likely to believe them. Knowing you’re at the zoo raises your prior probability that a polar bear is nearby, so the same testimony now leaves you with a much higher probability that the child is right.

In this way, Bayesianism updates priors with evidence to arrive at probabilities. It would be tempting to call it a successful synthesis of Empiricism and Rationalism, but because it doesn’t answer the question of where priors should come from, it’s only kicking the can down the road.
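
To make the dependence on the prior concrete, here is a minimal Python sketch of the polar bear example. Every number in it is an illustrative assumption, not data: the two priors (`prior_texas`, `prior_zoo`) and the reliability of the child's report are made up, and `posterior` is a hypothetical helper that simply applies Bayes' rule.

```python
def posterior(prior: float, p_report_if_true: float, p_report_if_false: float) -> float:
    """Bayes' rule: probability a polar bear is nearby, given the child's report."""
    # Total probability of hearing the report, whether or not a bear is there.
    evidence = p_report_if_true * prior + p_report_if_false * (1.0 - prior)
    return p_report_if_true * prior / evidence


# Assumed reliability of the child's testimony (illustrative only).
P_REPORT_IF_BEAR = 0.9      # child reports a bear when one is really there
P_REPORT_IF_NO_BEAR = 0.05  # child reports a bear when there is none

# Assumed priors (illustrative only).
prior_texas = 1e-6  # somewhere in Texas, no further context
prior_zoo = 0.3     # in Texas, but standing in a zoo

post_texas = posterior(prior_texas, P_REPORT_IF_BEAR, P_REPORT_IF_NO_BEAR)
post_zoo = posterior(prior_zoo, P_REPORT_IF_BEAR, P_REPORT_IF_NO_BEAR)

print(f"Texas, no context: {post_texas:.6f}")
print(f"Texas, at the zoo: {post_zoo:.3f}")
```

With these made-up inputs, the same testimony leaves the polar bear wildly improbable in one case and probable in the other. The arithmetic is identical in both; only the prior changed, and Bayes' rule has nothing to say about which prior was the right one to start with.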

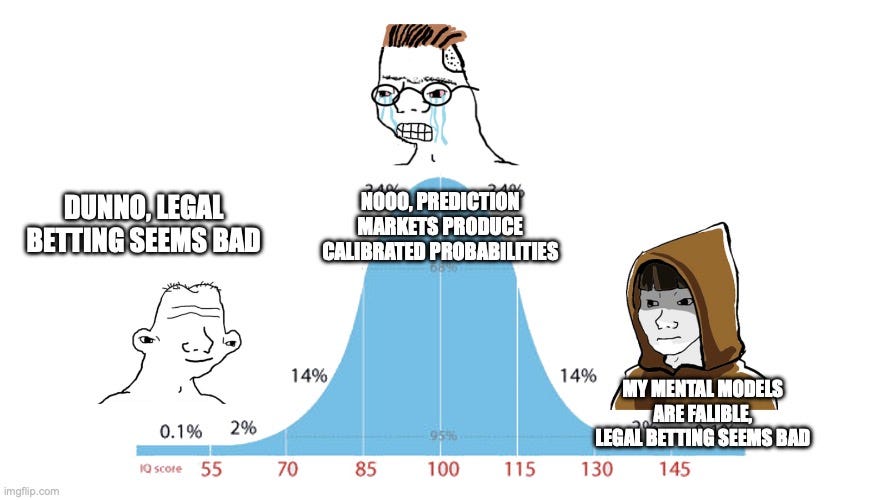

Rationalists believe you arrive at priors through a logical analysis; in a novel situation, map out the potentialities and reason what follows necessarily from the knowns.

Empiricists believe you arrive at priors through experience. When facing the unknown, search experience for appropriate comparisons and adjust confidence based on how well examples fit.

Shots Fired

The first shots in the Third War of Priors, possibly as friendly fire, were the “monkey’s paw” of prediction markets.

Rationalists reasoned that prediction markets would aggregate the wisdom of the crowds with skin in the game, and despite cultural guardrails on betting, would deliver valuable information about the world. The project was also important methodologically for Rationality, as it served as a proof of concept that human minds together can accurately predict the future. However, the markets haven’t been especially useful, and the largest impacts are new sports gambling and insider trading. Ironically, the Rationalists’ public bet on betting epistemology went sideways.

The AI Debate

The AI debate seems to have delivered one win to each side. The Rationalists reasoned early, with little available data, that AI would be important, and they were proven correct.

However, it’s seen as a win for Empiricism that LLMs, rather than being built, are grown through brute-force extraction of statistical patterns from huge amounts of data. Every attempt to build AI from first principles failed. So though Rationalists predict from logic, Claude speaks via empirical inferences that no one (including Anthropic) understands. This creates a tension in logically modeling a non-logical system.

In the most important debate, on whether AI will kill us all, Rationalists have a probability of doom, P(Doom), that ranges from 10% to 90%, with 30% to 50% being typical. These numbers usually come from thought experiments and extrapolation from past development.

Empiricists have lower probabilities, if they accept the probabilistic framing at all, and particularly chafe at ranges above 50%. They believe high-probability claims are rationalized vibes and forward-projected just-so stories. The reference class could be Homo erectus, but it could also be past failed predictions of technological apocalypse, such as overpopulation, peak oil, and nanobot grey goo.

The debate will be settled in the Empiricists’ favor if AI develops slowly, develops safely, or goes bad in finite ways that allow for learning. If the Rationalists are right, though, they won’t get to enjoy their victory, and neither will anyone else.

[1] There were arguably many more wars along the way, but wars get named for catchiness, not accuracy.

**Rationalists believe you arrive at priors through a logical analysis; in a novel situation, map out the potentialities and reason what follows necessarily from the knowns.**

**Empiricists believe you arrive at priors through experience. When facing the unknown, search experience for appropriate comparisons and adjust confidence based on how well examples fit.**

This is why we fired philosophers as decision makers. Taken together in this context, this is a pure false dilemma. We need both -- in various amounts, at various times, and in various spaces -- to establish, refine, and build more "true" priors.